How to Ramp Agent Autonomy Responsibly

The instinct when first running an AI agent is to give it everything it needs to complete the task: full tool access, a reasonable budget, and a clear instruction. Then you watch what happens.

What happens is sometimes perfect. Sometimes it’s a retry loop, a misinterpreted instruction, or an action that was technically correct but not what you meant. The first time that happens with a small task, it’s a learning experience. The first time it happens with a $500 budget and write access to your email, it’s an incident.

The answer isn’t to distrust agents. It’s to earn trust incrementally, the same way you would with any new system.

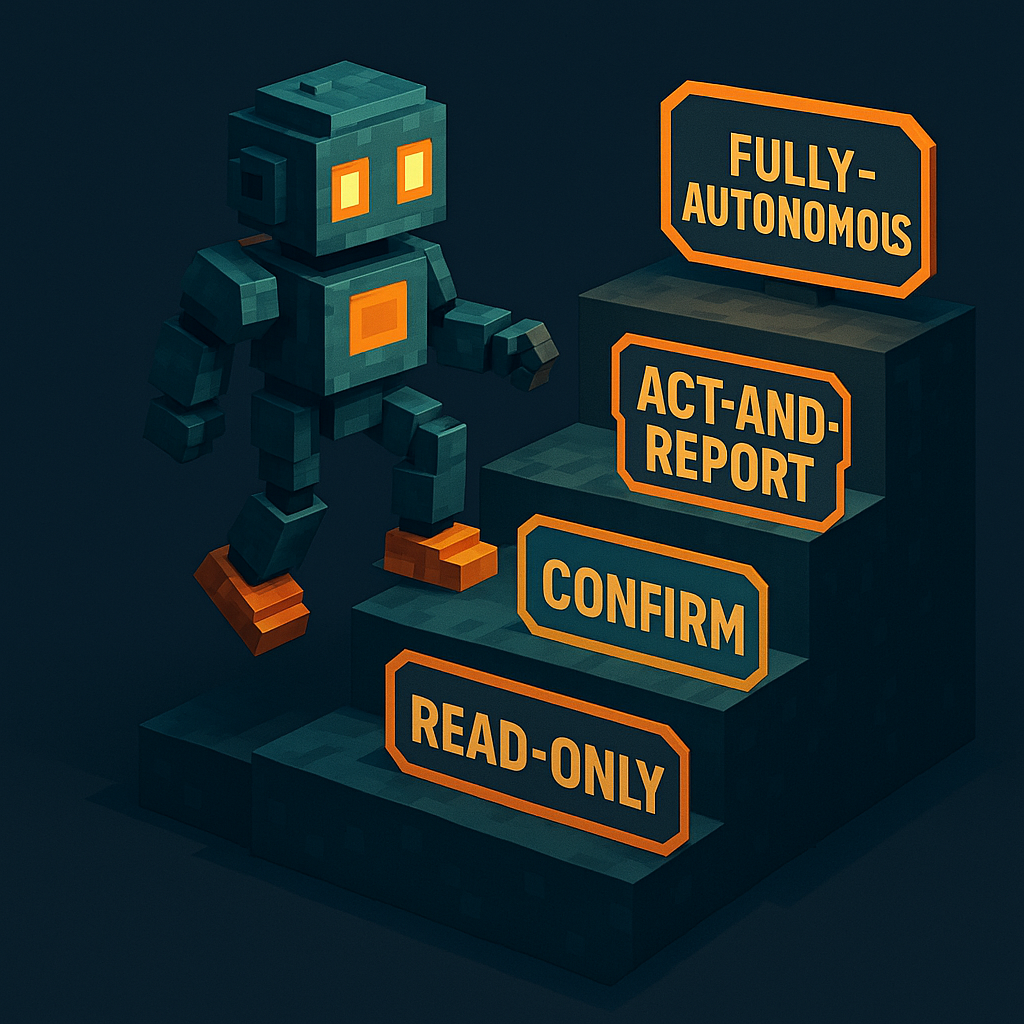

The four-stage ramp

An autonomy ramp is a staged process for expanding an AI agent's capabilities and permissions as it demonstrates reliable behavior. Rather than granting full access at deployment, the ramp starts with constrained scope — read-only access, small budgets, human confirmation — and expands each stage only after the previous stage has been verified. The ramp trades initial velocity for reduced blast radius.
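As a rough illustration, the ramp can be modeled as an ordered sequence in which an agent moves up at most one stage at a time, and only after the current stage has been verified. The sketch below uses the four stage names from the sections that follow; `Stage`, `next_stage`, and the `ceiling` parameter are hypothetical names chosen for this example, not part of any ATXP API.

```python
from enum import IntEnum


class Stage(IntEnum):
    READ_ONLY = 1
    ACT_AND_CONFIRM = 2
    ACT_AND_REPORT = 3
    FULLY_AUTONOMOUS = 4


def next_stage(current: Stage, verified: bool,
               ceiling: Stage = Stage.FULLY_AUTONOMOUS) -> Stage:
    """Advance by one stage at most, and only when the current stage is verified.

    `ceiling` lets a workflow stop short of full autonomy on purpose.
    """
    if not verified or current >= ceiling:
        return current
    return Stage(current + 1)


# A verified read-only agent moves up exactly one stage.
print(next_stage(Stage.READ_ONLY, verified=True).name)

# An unverified agent stays where it is.
print(next_stage(Stage.ACT_AND_CONFIRM, verified=False).name)

# A high-stakes workflow can be capped below Stage 4.
print(next_stage(Stage.ACT_AND_REPORT, verified=True,
                 ceiling=Stage.ACT_AND_REPORT).name)
```

The one-step rule is the design choice worth noting: there is no path from Stage 1 to Stage 3 that skips the human-confirmation stage.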

Stage 1: Read-only

The agent can observe, research, and report. No spending capability, no send capability, no write access to any system.

What the agent has: web_search, web_browse, read-only data access

What the agent doesn’t have: email_send, payment_make, any write tool

Budget: Zero

What to verify before advancing: Does the agent’s research match your expectations? Are the sources it cites reasonable? Does it stay within the task scope when given open-ended instructions?

Stage 2: Act-and-confirm

The agent proposes actions; a human approves before execution. The agent drafts emails but doesn’t send them. It identifies purchases but presents them for approval. The agent does the cognitive work; the human makes the final call.

What the agent has: All Stage 1 tools + draft/propose capability

Budget: Token budget for research tools only

Human approval required: Every action before execution

What to verify before advancing: Do the proposed actions match intent consistently? Are the edge cases surfaced correctly? Does the agent handle ambiguity by asking rather than guessing?
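The act-and-confirm pattern can be sketched as a queue of deferred actions that only run once a reviewer approves them. This is a minimal local illustration, not how ATXP implements approvals; `ProposedAction`, `ConfirmQueue`, and the lambda standing in for a human reviewer are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ProposedAction:
    """An action the agent wants to take, held until a human decides."""
    tool: str                    # e.g. "email_send"
    summary: str                 # description shown to the reviewer
    execute: Callable[[], str]   # deferred side effect; not run until approved


@dataclass
class ConfirmQueue:
    """Hypothetical Stage 2 gate: nothing executes without an approval."""
    pending: list[ProposedAction] = field(default_factory=list)
    log: list[str] = field(default_factory=list)

    def propose(self, action: ProposedAction) -> None:
        self.pending.append(action)

    def review(self, approve: Callable[[ProposedAction], bool]) -> list[str]:
        """Run each pending action only if the reviewer approves it."""
        results = []
        for action in self.pending:
            if approve(action):
                results.append(action.execute())
                self.log.append(f"approved: {action.summary}")
            else:
                self.log.append(f"rejected: {action.summary}")
        self.pending.clear()
        return results


queue = ConfirmQueue()
queue.propose(ProposedAction("email_send", "Send quote request to vendor",
                             lambda: "email sent"))
queue.propose(ProposedAction("payment_make", "Pay $40 invoice",
                             lambda: "payment made"))

# Stand-in for a human reviewer: approve emails, reject payments.
results = queue.review(lambda action: action.tool == "email_send")
print(results)
print(queue.log)
```

Because the side effect lives in a deferred callable, the agent can do all of the drafting work up front while the irreversible step waits for a decision.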

Stage 3: Act-and-report

The agent executes autonomously and reports results. Humans review the log but aren’t in the critical path. Irreversible actions (purchases, sent communications) still have per-action budget caps.

What the agent has: Stage 2 tools + email_send, limited payment_make

Budget: Task-sized with structural ceiling (e.g., $5 for a research task that costs ~$0.30)

Human review: Asynchronous, after task completion

# Stage 3 budget setup
npx atxp fund --agent "researcher" --amount 5.00
npx atxp limits --agent "researcher" --web-search 2.00 --email 0.50

What to verify before advancing: Does the agent complete tasks within budget? Are there surprise actions in the log (things it did that weren’t in the instructions)? Are costs consistent with estimates?
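To make the idea of a structural ceiling concrete, here is a small local model of a total budget with per-tool caps, using the same figures as the commands above ($5.00 total, $2.00 for web search, $0.50 for email). It only illustrates the arithmetic; `BudgetLedger` and `BudgetExceeded` are hypothetical names, and real enforcement would live in the payment layer rather than in the agent's own process.

```python
from decimal import Decimal


class BudgetExceeded(Exception):
    """Raised when a charge would break the total or a per-tool ceiling."""


class BudgetLedger:
    def __init__(self, total: str, per_tool: dict[str, str]):
        self.total = Decimal(total)
        self.per_tool = {tool: Decimal(cap) for tool, cap in per_tool.items()}
        self.spent: dict[str, Decimal] = {}

    def remaining(self) -> Decimal:
        return self.total - sum(self.spent.values(), Decimal("0"))

    def charge(self, tool: str, amount: str) -> Decimal:
        """Record a charge, refusing it if any ceiling would be exceeded."""
        cost = Decimal(amount)
        if tool not in self.per_tool:
            raise BudgetExceeded(f"{tool} has no spending allowance")
        tool_spent = self.spent.get(tool, Decimal("0")) + cost
        if tool_spent > self.per_tool[tool]:
            raise BudgetExceeded(f"{tool} would exceed its {self.per_tool[tool]} cap")
        if cost > self.remaining():
            raise BudgetExceeded(f"total budget of {self.total} would be exceeded")
        self.spent[tool] = tool_spent
        return self.remaining()


ledger = BudgetLedger("5.00", {"web_search": "2.00", "email_send": "0.50"})
ledger.charge("web_search", "0.30")   # a typical research task

try:
    ledger.charge("email_send", "0.75")   # above the email cap
except BudgetExceeded as err:
    print(f"blocked: {err}")

print(ledger.remaining())
```

The refused charge leaves the ledger untouched, so a runaway retry loop can burn at most the cap for that tool, never the whole balance.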

Stage 4: Fully autonomous

The agent executes and handles exceptions within policy. Humans set policy (spending limits, allowed tool types, escalation rules); the agent operates within it without routine oversight.

What the agent has: Full tool access scoped to its role

Budget: Policy-defined, with per-category limits

Human involvement: Policy setting, anomaly review, exception handling
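One way to picture a Stage 4 policy is as a small decision function: each requested action is allowed, escalated to a human, or denied. The sketch below is an assumption-laden illustration; the `Policy` fields, the `decide` function, and the dollar thresholds are invented for the example and do not describe an ATXP feature.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    allowed_tools: frozenset[str]
    per_action_limit: dict[str, float]   # hard cap per single action, by tool
    escalate_above: float                # spend that needs a human, any tool


def decide(policy: Policy, tool: str, cost: float) -> str:
    """Return "allow", "escalate", or "deny" for one requested action."""
    if tool not in policy.allowed_tools:
        return "deny"
    if cost > policy.per_action_limit.get(tool, 0.0):
        return "deny"
    if cost > policy.escalate_above:
        return "escalate"
    return "allow"


policy = Policy(
    allowed_tools=frozenset({"web_search", "email_send", "payment_make"}),
    per_action_limit={"web_search": 0.10, "email_send": 0.05, "payment_make": 50.0},
    escalate_above=20.0,
)

for tool, cost in [("payment_make", 12.0), ("payment_make", 35.0),
                   ("payment_make", 80.0), ("file_delete", 0.0)]:
    print(tool, cost, decide(policy, tool, cost))
```

Humans edit the policy object; the agent only ever sees the decision, which keeps routine work out of the review queue while still routing unusual spend to a person.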

Most teams reach Stage 4 for some tasks and not others. High-stakes or irreversible workflows may stay at Stage 3 indefinitely — and that’s fine. The ramp doesn’t require reaching Stage 4 everywhere.

Scoping tools by stage

"Nephew/intern analogy — see how they work before tossing them the keys to everything."

Louis Amira, co-founder, Circuit & Chisel

The autonomy ramp maps directly to tool access. In ATXP, you can scope exactly which tools each agent account has:

from atxp import AtxpToolkit

toolkit = AtxpToolkit.from_env()

# Stage 1: read-only
stage1_tools = toolkit.get_tools(["web_search", "web_browse"])

# Stage 3: act and report
stage3_tools = toolkit.get_tools(["web_search", "web_browse", "email_send"])

# Stage 4: full scope for this role
stage4_tools = toolkit.get_tools(["web_search", "web_browse", "email_send", "payment_make"])

Same account, same API key: different tool access per stage. The agent can’t call tools it hasn’t been given, regardless of what instructions it receives.
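The claim that an agent cannot call tools it has not been given comes down to an allowlist check at dispatch time. Here is a self-contained sketch of that check that does not use the ATXP library at all; `TOOL_IMPLS`, `STAGE_TOOLS`, `ToolNotGranted`, and `call_tool` are hypothetical, and the per-stage sets simply mirror the lists in the snippet above.

```python
from typing import Callable

# Hypothetical stand-ins for real tools; each returns a string for the log.
TOOL_IMPLS: dict[str, Callable[[str], str]] = {
    "web_search": lambda arg: f"searched for {arg!r}",
    "web_browse": lambda arg: f"fetched {arg!r}",
    "email_send": lambda arg: f"sent email to {arg!r}",
    "payment_make": lambda arg: f"paid {arg!r}",
}

# Tool grants per stage, mirroring the lists in the snippet above.
STAGE_TOOLS: dict[int, set[str]] = {
    1: {"web_search", "web_browse"},
    3: {"web_search", "web_browse", "email_send"},
    4: {"web_search", "web_browse", "email_send", "payment_make"},
}


class ToolNotGranted(PermissionError):
    """Raised when the agent requests a tool outside its current grant."""


def call_tool(stage: int, tool: str, arg: str) -> str:
    """Dispatch a tool call only if the tool is granted at this stage."""
    if tool not in STAGE_TOOLS[stage]:
        raise ToolNotGranted(f"{tool} is not granted at stage {stage}")
    return TOOL_IMPLS[tool](arg)


print(call_tool(1, "web_search", "gpu prices"))

try:
    # Even if a prompt tells the agent to send email, Stage 1 has no such tool.
    call_tool(1, "email_send", "boss@example.com")
except ToolNotGranted as err:
    print(f"refused: {err}")
```

The check sits outside the model, which is why instructions in a prompt cannot widen the grant.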

What triggers stage advancement

The question isn’t “has the agent been running for long enough?” It’s “has the agent demonstrated reliable behavior at the current stage?”

| Signal | Meaning |
|---|---|
| Task output matches intent consistently | Ready to consider advancement |
| Costs within 20% of estimate | Execution is predictable |
| No out-of-scope actions in log | Agent respects boundaries |
| Edge cases surfaced correctly | Uncertainty is handled well |
| Unexpected actions in log | Stay at current stage |
| Costs higher than estimated repeatedly | Investigate before advancing |

Reversible actions (web searches, draft generation) warrant shorter observation periods. Irreversible actions (purchases, sent emails, deleted data) warrant longer ones. The cost of a false negative (staying at Stage 2 longer than needed) is lower than the cost of a false positive (advancing too early).
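The signals in the table can be turned into a simple readiness check over a log of completed runs. The sketch below is one possible encoding under stated assumptions: `TaskRun`, `ready_to_advance`, and the `min_runs` parameter are hypothetical, and the observation count is a number you would choose yourself (higher for irreversible actions), not a figure from this article. Only the 20% cost tolerance comes from the table.

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    matched_intent: bool        # reviewer judged the output correct
    estimated_cost: float
    actual_cost: float
    out_of_scope_actions: int   # logged actions that were not requested


def ready_to_advance(runs: list[TaskRun], min_runs: int,
                     cost_tolerance: float = 0.20) -> tuple[bool, list[str]]:
    """Return (ready, reasons); an empty reasons list means ready."""
    reasons = []
    if len(runs) < min_runs:
        reasons.append(f"only {len(runs)} of {min_runs} required runs observed")
    if any(not run.matched_intent for run in runs):
        reasons.append("some outputs did not match intent")
    if any(run.out_of_scope_actions > 0 for run in runs):
        reasons.append("out-of-scope actions found in log")
    off_estimate = [
        run for run in runs
        if abs(run.actual_cost - run.estimated_cost) > cost_tolerance * run.estimated_cost
    ]
    if off_estimate:
        reasons.append(
            f"{len(off_estimate)} run(s) were more than {cost_tolerance:.0%} off the cost estimate"
        )
    return (not reasons, reasons)


clean_runs = [
    TaskRun(True, 0.30, 0.32, 0),
    TaskRun(True, 0.30, 0.28, 0),
    TaskRun(True, 0.30, 0.31, 0),
]
print(ready_to_advance(clean_runs, min_runs=3))

# One expensive run with an unrequested action blocks advancement.
mixed_runs = clean_runs + [TaskRun(True, 0.30, 0.45, 1)]
print(ready_to_advance(mixed_runs, min_runs=3))
```

Returning the reasons alongside the verdict matters in practice, because "stay at the current stage" is only useful if the reviewer can see which signal failed.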


Frequently asked questions

What is the autonomy ramp for AI agents?

A staged approach: start with read-only access, add act-and-confirm, then act-and-report, then full autonomy. Each stage requires verified reliable behavior before advancing.

Why not give agents full autonomy immediately?

Blast radius. Full autonomy from day one means the first unexpected behavior has unlimited impact. A ramp limits early failures to the permissions granted at each stage.

How do spending limits fit the ramp?

They scale with autonomy: no spending at Stage 1, a task-sized budget with a structural ceiling at Stage 3, and policy-defined limits at Stage 4. Financial access expands with demonstrated reliability.

What should I check before expanding permissions?

Actions match intent, costs match estimates, no out-of-scope actions in log, edge cases handled correctly. Reversible actions need less observation time than irreversible ones.

Can different agents in the same stack have different autonomy levels?

Yes — and they should. A researcher might be at Stage 3 while a buyer stays at Stage 2. Tool scoping in ATXP lets you give each agent exactly the access its current stage requires.

Do I have to reach Stage 4?

No. Some workflows should stay at Stage 3 indefinitely. The goal isn’t maximum autonomy; it’s the right autonomy level for the task’s risk profile.