Are AI Agents Safe? A Plain-English Risk Guide for 2026

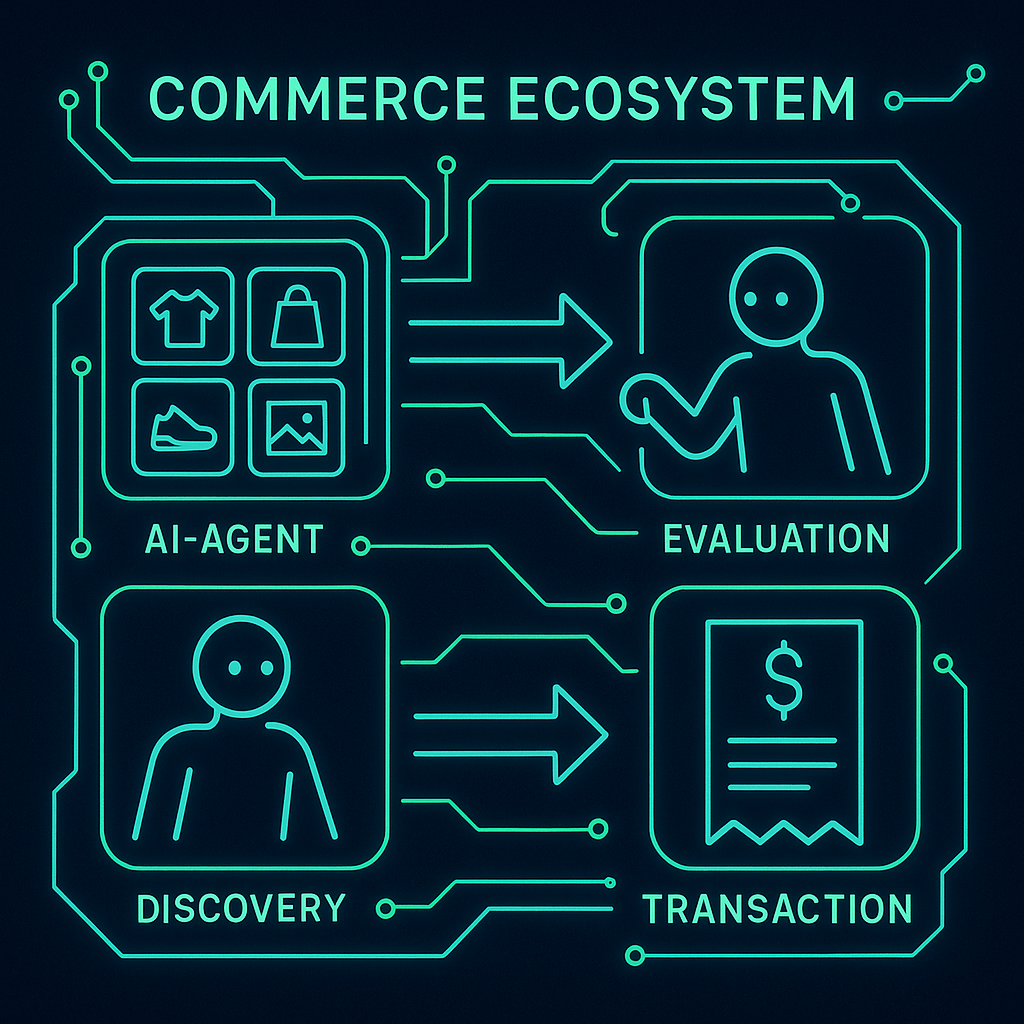

The concern is legitimate. An AI agent that can browse the web, send emails, make purchases, and run code is a system with real-world consequences. Whether it’s safe depends entirely on how it’s designed and what access you give it.

Here’s the honest breakdown of the risks, and the design patterns that contain them.

The short answer

AI agents are safe when designed correctly. The real risks — scope creep, spending runaway, data exposure, irreversible actions — are all containable by design. An agent with limited scope, a spending ceiling, confirmation steps for irreversible actions, and a complete audit trail has a bounded blast radius even when something goes wrong.

The question to ask isn’t “is AI safe?” — it’s “is this agent’s design sound?”

The four real risks

The principle of least privilege applied to AI agents means granting each agent only the minimum access, permissions, and funds required for the specific task it needs to complete — nothing more. This limits blast radius (damage if something goes wrong), simplifies revocation (revoking only what was granted), and reduces the surface area for scope creep and data exposure without limiting the agent's ability to perform its intended task.

Risk 1: Scope creep

An agent given broad access can use all of it, whether or not the task calls for it. If you give an agent access to your entire file system because it needs to read one folder, it can access all your files. If you give it write access when it only needs read access, it can modify things you didn’t intend.

The fix: Principle of least privilege. Give the agent access only to what the specific task requires, and no more. Read-only when read-only is sufficient. One folder, not the whole drive. One API endpoint, not the full API.
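To make "one folder, not the whole drive" concrete, here is a minimal sketch of a read-only file tool confined to a single directory. The `ScopedReader` class and its method name are hypothetical, made up for illustration; they are not part of ATXP or any particular agent framework.

```python
from pathlib import Path


class ScopedReader:
    """Read-only file access confined to one directory (hypothetical helper)."""

    def __init__(self, root: str) -> None:
        # Resolve once so symlinks and ".." segments are normalized up front.
        self.root = Path(root).resolve()

    def read_text(self, relative_path: str) -> str:
        # Resolve the requested path, then check it still sits under the root.
        target = (self.root / relative_path).resolve()
        if not target.is_relative_to(self.root):  # Python 3.9+
            raise PermissionError(f"{relative_path} is outside the granted folder")
        return target.read_text(encoding="utf-8")
```

The class exposes no write or delete method at all, so the tool cannot modify files even if the agent asks it to. A request such as `read_text("../secret.txt")` raises `PermissionError` instead of returning data.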

Risk 2: Spending runaway

An agent with access to an unlimited payment instrument — your credit card, your API account — can accumulate costs without a structural ceiling. A loop bug that causes 1,000 calls instead of 10 will generate a real invoice.

The fix: Give the agent its own pre-funded account with a hard ceiling. ATXP’s IOU model is designed specifically for this: the agent can only spend what’s loaded. When the balance hits zero, calls stop. Without a structural ceiling, there is no ceiling at all. How the IOU model works →
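The idea of a structural ceiling can be sketched in a few lines. This is an illustration of the general pre-funded pattern, not ATXP’s actual API: `PrefundedBudget`, `BudgetExhausted`, and `run_calls` are hypothetical names, and amounts are kept in integer cents to avoid floating-point rounding.

```python
class BudgetExhausted(Exception):
    """Raised when a charge would exceed the remaining balance."""


class PrefundedBudget:
    """A balance that can only go down (hypothetical, for illustration)."""

    def __init__(self, loaded_cents: int) -> None:
        self.balance_cents = loaded_cents

    def charge(self, cost_cents: int) -> None:
        if cost_cents < 0:
            raise ValueError("cost must be non-negative")
        # Refuse the charge before spending, so the balance never goes negative.
        if cost_cents > self.balance_cents:
            raise BudgetExhausted("balance too low for this call")
        self.balance_cents -= cost_cents


def run_calls(budget: PrefundedBudget, cost_per_call_cents: int, attempts: int) -> int:
    """Simulate a loop of paid calls; return how many were actually paid for."""
    completed = 0
    for _ in range(attempts):
        try:
            budget.charge(cost_per_call_cents)
        except BudgetExhausted:
            break  # the ceiling stops the loop, not the agent's own judgment
        completed += 1
    return completed
```

With 100 cents loaded and calls costing 10 cents each, a buggy loop that attempts 1,000 calls completes 10 and then stops, because the limit is enforced where the money is spent.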

Risk 3: Data exposure

An agent processing sensitive documents might surface or transmit data you didn’t intend to expose. This is especially relevant for agents connected to internal systems, customer data, or confidential files.

The fix: Scope access to the minimum needed (see Risk 1), and be explicit about what the agent is not allowed to transmit. Most agent frameworks support negative instructions (“do not include customer email addresses in any output”) that constrain behavior without limiting capability.
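Instructions in a prompt are advisory, so it can help to pair them with a deterministic filter on whatever the agent sends out. The sketch below redacts email addresses from outbound text. The function name and the regular expression are illustrative assumptions, and the pattern is deliberately simple: it will not match every valid address format.

```python
import re

# Simplified email pattern (an assumption for illustration, not RFC-complete).
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(text: str) -> str:
    """Replace anything that looks like an email address before output leaves."""
    return EMAIL_PATTERN.sub("[redacted email]", text)
```

Running the filter on every outbound message means a missed instruction does not automatically become a leak for this one data type. Other sensitive fields would need their own patterns or a dedicated data-loss-prevention tool.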

Risk 4: Irreversible actions

Sent emails can’t be unsent. Completed purchases require a return process. Deleted files may be unrecoverable. An agent that executes irreversible actions without confirmation creates situations that are difficult to undo.

The fix: Confirmation steps before irreversible actions. Many frameworks support dry-run modes that show what would happen before it happens. For new agent deployments, require human approval for any irreversible action until you’ve established enough runs to trust the agent’s judgment for that task type.
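A confirmation gate with a dry-run switch might look roughly like this. `Action`, `run_action`, and the `approve` callback are hypothetical names chosen for the sketch; real frameworks expose their own hooks for human approval.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    description: str
    irreversible: bool
    execute: Callable[[], str]


def run_action(
    action: Action,
    approve: Callable[[str], bool],
    dry_run: bool = False,
) -> str:
    """Run an action, gating irreversible ones behind an approval callback."""
    if dry_run:
        # Report what would happen without touching anything.
        return f"DRY RUN: would {action.description}"
    if action.irreversible and not approve(action.description):
        return f"SKIPPED: {action.description} (not approved)"
    return action.execute()
```

In practice `approve` could prompt a person in a terminal or post to a review queue. Reversible actions pass straight through, so the gate adds friction only where a mistake is hard to undo.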

How ATXP addresses each risk by design

| Risk | ATXP design response |
|---|---|
| Scope creep | Tools grant specific capabilities; agent can’t access what’s not in its tool registry |
| Spending runaway | IOU balance is the structural ceiling — no overrun possible |
| Data exposure | Per-tool access controls; agent identity is separate from developer credentials |
| Irreversible actions | Transaction log creates audit trail; confirmation patterns available in all supported frameworks |

"Think of it like a new employee — or a nephew. You want to see how they work before tossing them the keys to everything. Give them a small task with a clear output. See what they do with it. The trust you build in those first few runs is what earns the right to go bigger."

Louis Amira — Co-founder, ATXP

The nephew analogy is operationally useful. You don’t hand a new employee the admin password and leave for the week. You start with bounded tasks, review the output, extend trust as they demonstrate judgment. The same pattern works for agents — and it’s not just caution, it’s the pattern that reliably produces agents that work well.

The safety checklist before deploying an agent

Before the first run:

- Scope access to the minimum the task requires

- Use a pre-funded account (not your card or API key) for any spending

- Set a spending ceiling below what would cause real damage

- Define what counts as a success so the agent has a clear stopping point

- Enable logging — you need to see what the agent did if something goes wrong

For any irreversible action category:

- Require confirmation for the first N runs until you’ve verified the agent’s judgment

- Test with a dry-run mode before live execution where available

- Start with low-stakes instances of the action before high-stakes ones

For ongoing production deployments:

- Review the transaction log periodically — not every run, but enough to catch drift

- Set up alerts for unusual spend patterns

- Audit access grants periodically — did the scope creep over time?
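One simple way to approximate the "unusual spend" alert from the checklist is to compare today’s total against a baseline built from the transaction log. The function name, the median baseline, and the threshold factor of 3 are all assumptions for illustration; the right rule depends on how spiky your normal usage is.

```python
from statistics import median


def unusual_spend(daily_totals: list[float], today: float, factor: float = 3.0) -> bool:
    """Flag today's spend if it exceeds `factor` times the median of past days."""
    if not daily_totals:
        return False  # no baseline yet, so nothing to compare against
    return today > factor * median(daily_totals)
```

With past daily totals of 2.0, 3.0, and 2.5, the median is 2.5, so any day above 7.5 is flagged. The median is used rather than the mean so that one earlier spike does not raise the baseline and hide the next one.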

What the agent safety research says

The academic literature on AI agent safety distinguishes between three failure modes:

Specification failure — the agent does what you asked, but you asked for the wrong thing. An agent told to “minimize customer support tickets” might achieve this by making it hard to submit tickets. This is a goal-specification problem, not a technology problem — and it’s the most common failure mode in practice.

Generalization failure — the agent handles cases it was designed for correctly, but encounters an unexpected case and acts poorly. Testing across edge cases before production deployment catches most of these.

Adversarial failure — the agent is manipulated by external inputs (prompt injection from a malicious web page, for example) into taking unintended actions. Sandboxed execution environments and content filtering on agent inputs address this.

None of these failure modes is unique to AI agents; they apply to any software operating autonomously. The mitigations are also familiar to anyone who’s run production software: precise specifications, edge-case testing, and adversarial input hardening.

The bottom line

The right mental model for agent safety isn’t “is this AI dangerous?” — it’s “does this system have appropriate safeguards for its level of access?”

A well-designed agent with limited scope, a spending ceiling, confirmation steps for irreversible actions, and an audit trail is safer than a poorly designed human-operated process with no logging and no spending controls.

An agent with unlimited access to your accounts, no spending ceiling, and no confirmation steps for purchases is not safe — not because it’s AI, but because no system with those characteristics is safe.

The agent itself isn’t the safety variable. The design is.

For the full practical guide: should I trust an AI agent? →

npx atxp

Give your agent isolated credentials, a pre-funded IOU balance, and a hard spending ceiling by design. AI agent safety guide → · How IOU spending limits work →

Frequently asked questions

Are AI agents safe to use?

Yes, when designed with limited scope, a spending ceiling, confirmation steps for irreversible actions, and logging. The risks are real but containable. The design is the safety variable, not the AI.

What are the main risks?

Scope creep, spending runaway, data exposure, and irreversible actions — each with a known design pattern to contain it.

Can an agent make purchases I didn’t authorize?

Only if you give it payment access without a structural ceiling. ATXP’s IOU model provides a hard ceiling by design. How spending limits work →

Can an agent delete my files?

Only if you give it write or delete access. Give agents read-only access where read-only is sufficient; that is the principle of least privilege in practice.

How do I make my agent safer?

Limit scope, use a pre-funded account with a hard ceiling, add confirmation for irreversible actions, review first runs, log everything. What if my agent makes a mistake? →

What happens if an agent makes a mistake?

Depends on reversibility. Research and generation are reversible. Purchases and sent emails are harder to undo. Require confirmation before irreversible actions for new workflows.